Application of AI and deep learning technology for IPE education under dual track cultivation model

Datasets collection, experimental environment and parameters setting

The dataset selected for the experiment is an online education dataset that is publicly available and widely used in educational research. It contains rich data on learning behaviors and educational activities, making it well suited to analyzing educational effectiveness and learning patterns. The dataset comprises platform course data, learning records, class data, class member information, learning logs, and browsing history, totaling approximately 12 million entries. The experiment mainly uses the course learning records, user behavior logs, and interaction data, which reflect students’ level of engagement, cognitive habits, and emotional attitudes in theoretical learning. These behavioral characteristics are closely related to the learning enthusiasm, cognitive internalization, and value recognition that ideological and political education (IPE) aims to cultivate. In addition, the multidimensional behavioral indicators in the dataset (completion rate, interaction frequency, and task response time) provide a data foundation for the theoretical evaluation process in the “theory-practice” dual-track model proposed here, supporting quantitative analysis of vocational college students’ ideological and political awareness and behavioral performance.

Table 5 provides detailed information about the dataset.

The dataset is available for download on the Alibaba Cloud Tianchi Open Platform website (https://tianchi.aliyun.com/).

In the data preprocessing stage, this work cleans and organizes the original dataset: missing values are filled with the mean of adjacent periods, and outliers are identified and removed using box plots and Z-scores. To ensure that the sample represents the typical behaviors of vocational college students in the context of ideological and political education, this work also checks the basic distributions (gender, grade, learning period, etc.) and applies random sampling to balance the sample sizes of different groups, thereby supporting the reliability and generalization ability of the subsequent experimental analysis.
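The preprocessing steps described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function names, the 1.5 × IQR box-plot whisker rule, the Z-score threshold of 3, and the choice to drop a point when either rule flags it are all assumptions, since the text does not specify them.

```python
from statistics import mean, pstdev, quantiles


def fill_adjacent_mean(values):
    """Fill each missing entry (None) with the mean of the nearest
    known values before and after it (assumed reading of
    'adjacent period mean filling')."""
    filled = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = next((values[j] for j in range(i - 1, -1, -1)
                     if values[j] is not None), None)
        right = next((values[j] for j in range(i + 1, len(values))
                      if values[j] is not None), None)
        neighbours = [x for x in (left, right) if x is not None]
        filled[i] = mean(neighbours) if neighbours else None
    return filled


def iqr_bounds(values, k=1.5):
    """Box-plot whisker limits: [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def remove_outliers(values, z_thresh=3.0, k=1.5):
    """Keep a value only if it passes both the box-plot rule and the
    Z-score rule (assumption: either rule alone is enough to drop it)."""
    low, high = iqr_bounds(values, k)
    mu, sigma = mean(values), pstdev(values)
    kept = []
    for v in values:
        z = 0.0 if sigma == 0 else abs(v - mu) / sigma
        if low <= v <= high and z <= z_thresh:
            kept.append(v)
    return kept
```

For example, `fill_adjacent_mean([10, None, 14])` fills the gap with 12, and `remove_outliers` applied to a series of task response times drops a single extreme value while leaving the rest intact.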

To ensure the smooth progress of the research, experiments are conducted in a high-performance hardware environment:

- (1) GPU (Graphics Processing Unit): NVIDIA Tesla V100.
- (2) CPU (Central Processing Unit): Intel Xeon E5-2698 v4.
- (3) Memory: 128 GB.
- (4) Storage: 1 TB.
- (5) Network: 1 Gbps.

This configuration provides an efficient experimental environment for training and evaluating the deep learning models. The experimental parameters for the model are uniformly set as follows: the number of hidden units in each layer is 128, with a total of 3 layers. The learning rate is set to 0.01, the batch size to 64, and the regularization parameter to 0.001. The model includes 3 convolutional layers, each with 32 filters of size 3 × 3, and pooling layers with a window size of 2 × 2.

The 128 hidden units can capture rich features without significantly increasing computational complexity. A lower number of hidden units is suitable for small-scale educational data, while a higher number can better handle more complex educational contexts, ensuring effective modeling of diverse learning behaviors. A depth of three layers is sufficient to capture the hierarchical features of the data while avoiding the overfitting associated with deeper models. For educational data, this moderate depth can adapt to varying data sizes and complexities, handling both single-dimensional data (such as student grades) and multi-dimensional data (such as course interactions and behaviors).

A learning rate of 0.01 is a common initial value that balances fast convergence against the risk of settling in a poor local minimum when convergence is too rapid. Larger learning rates are suitable for initial parameter adjustments, while a learning rate decay strategy can be applied for optimization in different educational scenarios. A batch size of 64 is a typical medium value, balancing training speed and model stability. For smaller datasets, a smaller batch size (such as 32) gives more stable parameter updates, while for large datasets the batch size can be increased according to available memory. The regularization parameter controls model complexity and prevents overfitting. In educational data, where data volume and quality vary across educational backgrounds, a moderate regularization parameter can improve the model’s generalization ability across diverse data types.

Three convolutional layers can extract low-level features and combine multi-layer feature representations to learn behavior patterns. In the educational context, different types of data (such as behaviors and content) require specific feature extraction strategies, and three convolutional layers are adequate for modeling multi-dimensional data features. The 32 filters can extract key features without significantly increasing computational cost. The number of filters can be adjusted to data complexity, with additional filters introduced for more complex interaction data to improve feature extraction. A filter size of 3 × 3 is commonly used in convolutional networks and is effective in extracting local features. For educational data such as learning behavior sequences, a 3 × 3 filter is sufficient to capture local patterns, making it suitable for various educational scenarios. The 2 × 2 pooling window reduces the feature map size and helps prevent overfitting. For large datasets this reduces computational complexity, while for smaller datasets it preserves sufficient feature information, adapting to multiple educational data contexts.
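To make the stated hyperparameters concrete, the sketch below collects them in one configuration object and traces how the 2 × 2 pooling shrinks a feature map across the three convolutional layers. Several details are assumptions not given in the text: that each convolution uses stride 1 with "same" padding and is followed by one pooling layer, the hypothetical 32 × 32 input size, and the step-decay schedule (`gamma`, `step`) used to illustrate learning rate decay.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    # Values taken from the text.
    hidden_units: int = 128
    hidden_layers: int = 3
    learning_rate: float = 0.01
    batch_size: int = 64
    regularization: float = 0.001
    conv_layers: int = 3
    filters: int = 32
    kernel_size: int = 3
    pool_size: int = 2


def feature_map_shape(cfg, height, width):
    """Return (channels, height, width) after all conv + pool blocks.

    Assumes stride-1 'same' convolutions (spatial size unchanged) and
    one non-overlapping pool after each convolution.
    """
    for _ in range(cfg.conv_layers):
        height //= cfg.pool_size
        width //= cfg.pool_size
    return cfg.filters, height, width


def decayed_learning_rate(cfg, epoch, gamma=0.5, step=10):
    """Step decay (hypothetical schedule): multiply the initial rate
    by gamma once every `step` epochs."""
    return cfg.learning_rate * gamma ** (epoch // step)


cfg = ModelConfig()
print(feature_map_shape(cfg, 32, 32))   # hypothetical 32 x 32 input
print(decayed_learning_rate(cfg, 20))
```

Under these assumptions each pooling layer halves both spatial dimensions, so three blocks reduce the map by a factor of 8 per side, which is where the reduction in computation noted above comes from.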

The comparative models used in the experiment are Bidirectional Encoder Representations from Transformers (BERT) and A Robustly Optimized BERT Pretraining Approach (RoBERTa). BERT and RoBERTa are selected as baseline models mainly due to their broad representativeness and powerful performance in educational text analysis and understanding tasks. These two models belong to pure text models, while the proposed model belongs to multimodal models. This comparison aims to verify whether the optimized model has basic advantages and generalization ability on text-based educational data. BERT utilizes a bidirectional Transformer model that considers both left and right contextual information. It is pre-trained using the Masked Language Model and Next Sentence Prediction tasks. BERT has demonstrated excellent performance across various Natural Language Processing tasks, including question-answering systems, sentiment analysis, and named entity recognition. RoBERTa, an improved version of BERT proposed by Facebook, optimizes the pre-training process and increases training data to further enhance model performance. RoBERTa removes the Next Sentence Prediction task, uses more data, and trains for a longer period. Additionally, it dynamically generates masks in each epoch to enhance model robustness.
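The difference between a fixed mask and RoBERTa-style dynamic masking can be illustrated with a short sketch. It is deliberately simplified and is not the original implementation: tokens are plain strings, every selected token is replaced by `[MASK]` (BERT's actual recipe also substitutes random or unchanged tokens for a fraction of the selected positions), and the function names are hypothetical. The 15% masking probability follows the value used in BERT pre-training.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, rng, mask_prob=0.15):
    """Replace each token with [MASK] independently with prob. mask_prob."""
    return [MASK if rng.random() < mask_prob else tok for tok in tokens]


def static_masking(tokens, epochs, seed=0):
    """Mask once during preprocessing and reuse the same mask each epoch."""
    masked = mask_tokens(tokens, random.Random(seed))
    return [list(masked) for _ in range(epochs)]


def dynamic_masking(tokens, epochs, seed=0):
    """Draw a fresh mask every epoch, so the model sees different
    prediction targets for the same sentence over training."""
    rng = random.Random(seed)
    return [mask_tokens(tokens, rng) for _ in range(epochs)]


sentence = "students review the course material before the online quiz".split()
for epoch, masked in enumerate(dynamic_masking(sentence, epochs=3)):
    print(epoch, " ".join(masked))
```

With static masking every epoch repeats the same prediction targets, whereas dynamic masking varies them, which is the robustness benefit referred to above.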

Performance evaluation

Performance evaluation of optimized model

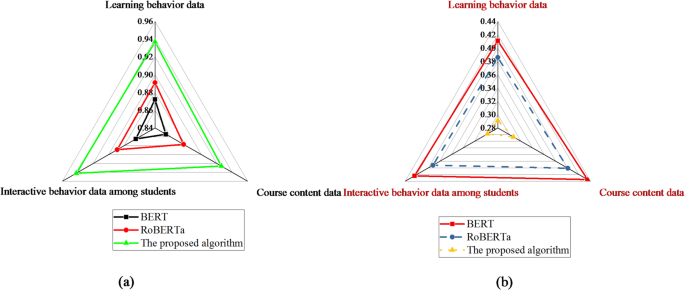

In the performance evaluation experiment, this work first compares the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) and the logarithmic loss (Log Loss). Figure 3 displays the experimental results.

Figure 3. Performance evaluation: (a) AUC-ROC; (b) Log Loss.

Figure 3 suggests that under the AUC-ROC metric, the optimized model outperforms both BERT and RoBERTa across all data types. For instance, in learning behavior data, the AUC-ROC of the optimized model is 0.937, compared to 0.891 for RoBERTa. This indicates that the optimized model has a significant advantage in distinguishing between positive and negative samples, particularly in student interaction behavior data, where it performs exceptionally well with an AUC-ROC of 0.942. The optimized model also demonstrates a lower Log Loss value. For example, in learning behavior data, the Log Loss is 0.291, compared to 0.386 for RoBERTa. This suggests that the optimized model provides more accurate probabilistic predictions, with higher confidence levels in predictions related to course content and interaction behavior data. Figure 4 presents a comparison of Root Mean Square Error (RMSE) and Mean Reciprocal Rank (MRR).
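For reference, the two classification metrics discussed above can be computed as in the sketch below. It uses the standard definitions (AUC-ROC as the probability that a randomly chosen positive sample is scored above a randomly chosen negative one, and Log Loss as the mean binary cross-entropy); the function names and toy inputs are illustrative and are not taken from the paper's experiments.

```python
import math


def auc_roc(y_true, y_score):
    """AUC-ROC via pairwise comparison of positive and negative scores.
    Ties count as half a win. O(P * N), fine for small samples."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def log_loss(y_true, y_prob, eps=1e-15):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(auc_roc(labels, scores))
print(log_loss(labels, scores))
```

A higher AUC-ROC means better separation of positive and negative samples, while a lower Log Loss means the predicted probabilities are closer to the true labels, which is why the two metrics are read in opposite directions in Figure 3.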

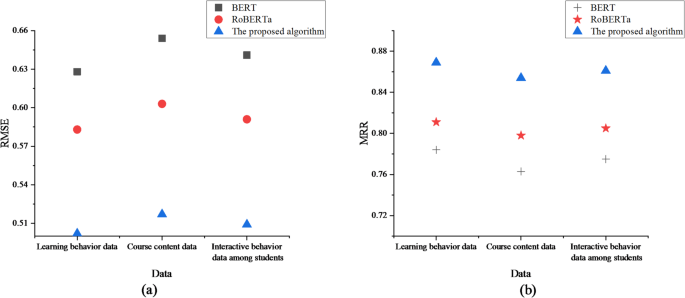

Figure 4. Performance comparison results: (a) RMSE; (b) MRR.

Figure 4 shows that, under the RMSE metric, the optimized model significantly outperforms BERT and RoBERTa, with a notably lower error. For example, in student interaction behavior data, the RMSE of the optimized model is 0.509. This indicates that the optimized model has higher accuracy in regression tasks, particularly demonstrating lower prediction errors in learning behavior data and course content data. In terms of the MRR metric, the optimized model performs the best across all data types. For instance, in course content data, the MRR reaches 0.854. This suggests that the optimized model exhibits stronger capabilities in relevance ranking tasks, especially in learning behavior data, where it can better prioritize learning resources.
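The regression and ranking metrics above follow standard definitions, sketched here for reference. The function names and toy data are illustrative assumptions; in particular, the resource identifiers in the usage example are hypothetical and do not come from the dataset.

```python
import math


def rmse(y_true, y_pred):
    """Root Mean Square Error: square root of the mean squared residual."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def mean_reciprocal_rank(ranked_lists, relevant_sets):
    """MRR: average over queries of 1 / rank of the first relevant item.
    A query with no relevant item in its list contributes 0."""
    total = 0.0
    for ranked, relevant in zip(ranked_lists, relevant_sets):
        for rank, item in enumerate(ranked, start=1):
            if item in relevant:
                total += 1.0 / rank
                break
    return total / len(ranked_lists)


# Hypothetical recommendations for two students.
recommended = [["video_3", "quiz_1", "reading_7"],
               ["quiz_2", "video_5", "reading_1"]]
useful = [{"quiz_1"}, {"quiz_2"}]
print(mean_reciprocal_rank(recommended, useful))
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```

An MRR close to 1 means the first relevant learning resource usually appears at or near the top of the ranking, while RMSE is in the same units as the predicted quantity and penalizes large errors more heavily than small ones.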

To further validate the performance of the model, this work also selects three classic multimodal models, Visual-Linguistic BERT (VL-BERT), VisualBERT, and Learning Cross-Modality Encoder Representations from Transformers (LXMERT), for comparison under the same data and evaluation metrics. All three models support joint image-text understanding, so they are more comparable to the proposed model in input form and target task and give a more objective picture of its performance in real multimodal education scenarios. Table 6 lists the detailed results of all comparative models on the various indicators.

The results in Table 6 show that the proposed model outperforms the comparison models on all four indicators: AUC-ROC (0.937), Log Loss (0.291), RMSE (0.509), and MRR (0.854). Its RMSE is 10.8% lower and its Log Loss 8.5% lower than those of LXMERT, the best-performing comparison model, indicating improved prediction accuracy and stability. This comparison indicates that the proposed model has good joint understanding ability and comprehensive performance in multimodal education scenarios.

Evaluation of the effectiveness of the dual-track cultivation model

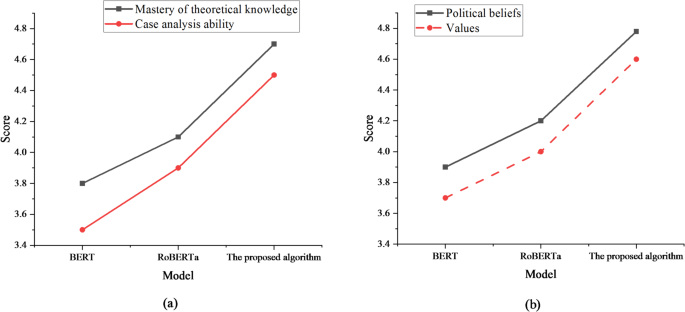

To evaluate the effectiveness of the dual-track cultivation model, a scoring system (1–5 points) is used, where higher scores represent better performance on the indicator. The scoring system is designed around the course objectives and the learning behavior data analyzed by the AI models, and covers multiple dimensions such as knowledge mastery, belief identification, and practical participation. The evaluation sample includes a total of 320 students from two grades, as well as 8 ideological and political teachers. Before the evaluation, all evaluators receive consistency training on the meaning of the indicators and the evaluation criteria to ensure the validity and comparability of the scores. Moreover, the scoring results are cross-validated against the learning behavior analysis output by the AI model (such as knowledge weakness identification and interactive participation). This grounds the subjective evaluation in objective data, making the results more interpretable and more closely tied to the AI analysis. Figure 5 presents the scores for mastery of ideological and political knowledge and for political consciousness.

Figure 5. Evaluation results for mastery of ideological and political knowledge and for ideological and political consciousness: (a) Mastery of ideological and political knowledge; (b) Ideological and political consciousness.

Figure 5 suggests that in terms of scores for mastering ideological and political knowledge, the optimized model achieves scores of 4.7 and 4.5. The “theoretical knowledge mastery” score of 4.7 is higher than those of the traditional models BERT (3.8) and RoBERTa (4.1), reflecting the advantage of the optimized model in knowledge transfer efficiency. Although BERT and RoBERTa perform well in these dimensions, they do not reach the level of the optimized model. Regarding political consciousness, in the political belief scoring, BERT scores 3.9, showing stable performance, and RoBERTa scores 4.2, slightly higher than BERT. The optimized model scores the highest at 4.8, clearly outperforming both. In the value belief scoring, BERT scores 3.7, which is average; RoBERTa scores 4.0, better than BERT; and the optimized model scores 4.6, markedly higher than both, demonstrating its strong performance in value cultivation. Figure 6 presents the scoring results for ideological and political practice ability and student satisfaction.

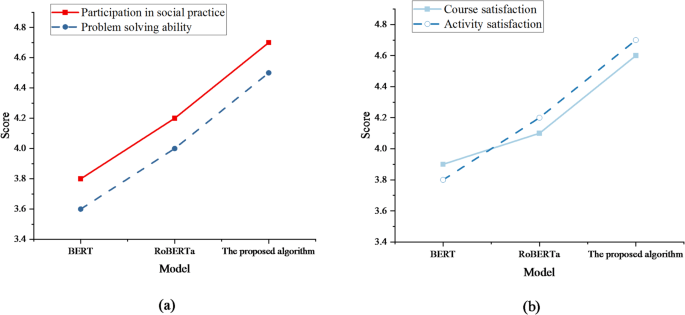

Figure 6. Results for ideological and political practice ability and student satisfaction: (a) Ideological and political practice ability; (b) Student satisfaction.

Figure 6 reveals that the optimized model demonstrates clear advantages in both social practice participation and problem-solving ability, with scores of 4.7 and 4.5, respectively. The “Social Practice Participation” score of 4.7 is based on a comprehensive evaluation of multiple indicators, including completion, quality of practice reports, and mentor evaluation. The participation sample includes 320 students, reflecting the effectiveness of the model in practical guidance from a data perspective. These ratings indicate that the proposed optimization method can enhance students’ ideological and political literacy in practical applications, and they offer useful insights and methods for IPE in vocational colleges. Regarding student satisfaction, the optimized model achieves the highest course satisfaction score of 4.6, and activity satisfaction reaches 4.7. This suggests that the proposed optimization method can also improve overall student satisfaction in practical applications.

To further objectively evaluate the effectiveness of the practical teaching process, this work explores the effectiveness of the proposed model in actual teaching. Two classes (experimental group of 20 students and control group of 20 students) were selected from a certain university in a certain semester. The experimental group adopted the optimized model, while the control group continued to use traditional practical teaching methods. The evaluation indicators include the completion rate of students’ participation in social practice, the quality rating of practice reports, and the evaluation of practical performance by mentors. The final evaluation results are shown in Table 7.

Table 7 shows that the experimental group performs better than the control group on all evaluation indicators, with a 13% increase in the completion rate of social practice participation, a 13-point increase in the average score of practice reports, and increases of 0.7, 1.0, and 0.5 points in mentor evaluation, problem-solving ability, and team collaboration performance, respectively. This indicates that the optimized model can improve students’ practical participation, report quality, and comprehensive ability, with the largest gain in problem-solving ability.

Discussion

The experimental results demonstrate that the optimized model proposed shows significant advantages across all four metrics: AUC-ROC, Log Loss, RMSE, and MRR. Specifically, in classification tasks, the optimized model effectively distinguishes between positive and negative samples and exhibits higher precision in probability prediction. In regression tasks, the optimized model provides lower prediction errors, demonstrating stronger generalization ability. Moreover, in ranking tasks, the optimized model accurately ranks relevance, showcasing superior learning resource recommendation capabilities. In contrast, although BERT and RoBERTa show relatively stable performance across the metrics, neither of them reaches the level achieved by the optimized model. This indicates that the proposed optimized model offers greater applicability and reliability across multidimensional tasks. By combining deep learning techniques with the characteristics of educational data, the optimized model has demonstrated substantial potential in practical educational scenarios. Future work could focus on expanding the model’s scope and enhancing its performance across diverse educational contexts.

In evaluating the dual-track cultivation model, the optimized model demonstrates significant advantages in theoretical knowledge acquisition. This result suggests that the optimized model is more efficient in course design and knowledge transfer, enabling students to grasp ideological and political theory more comprehensively. The optimized model also excels in enhancing students’ ideological and political awareness. Through optimized educational methods and personalized guidance, students’ recognition of national political systems and core socialist values significantly improves. Additionally, the scores for student participation in social practice and problem-solving abilities are significantly higher with the optimized model. This indicates that the optimized model not only enhances theoretical knowledge but also effectively applies this knowledge to practice, developing students’ problem-solving skills. In terms of student satisfaction, the optimized model also shows significant advantages. Both course satisfaction and activity satisfaction scores are higher with the optimized model compared to BERT and RoBERTa.
