Modelling of hybrid deep learning framework with recursive feature elimination for distributed denial of service attack detection systems

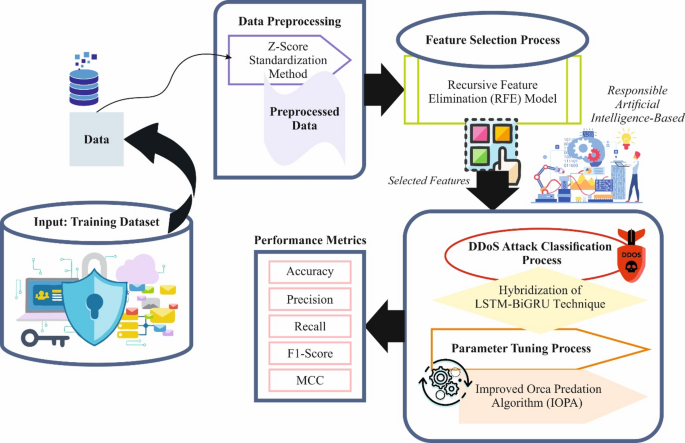

In this study, the RAIHFAD-RFE model was proposed for cybersecurity systems. The study aimed to analyse and propose efficient cybersecurity strategies for detecting, mitigating and preventing DDoS attacks using advanced techniques. The model comprises data pre-processing, feature selection, attack classification and parameter tuning. Figure 2 illustrates the workflow of the RAIHFAD-RFE method.

Workflow of the RAIHFAD-RFE model.

Pre-processing using Z-score

As a primary step, the RAIHFAD-RFE technique applies Z-score standardisation in the data pre-processing stage to clean, transform and organise raw data into a structured format37. This technique was chosen because it rescales each feature to a mean of zero and a standard deviation of one. It is particularly beneficial when features have varying scales, as it ensures that each feature contributes equally to the learning process and prevents the model from being biased towards features with larger numerical ranges. Compared with min-max scaling, it is also less sensitive to outliers, making it more robust for real-world network traffic data. Standardised inputs further improve the convergence speed and stability of the gradient-based optimisation methods used in DL techniques, such as long short-term memory (LSTM) and bidirectional gated recurrent unit (BiGRU). Moreover, Z-score normalisation is widely applicable and consistent across datasets, thus enhancing generalisation.

The method subtracts the mean from each feature and divides the result by the standard deviation, yielding a mean of 0 and a standard deviation of 1. It is effective for models that typically assume normally distributed input features, such as logistic and linear regression. The Z-score normalisation of feature \(x\) is computed using the following equation:

$$\:{x}^{{\prime\:}}=\frac{x-mean\left(x\right)}{std\left(x\right)}\:\:$$

(1)

Here, \(x^{\prime}\) is the normalised value, \(x\) is the original value, \(std\left(x\right)\) is the standard deviation of \(x\) and \(mean\left(x\right)\) is the average of feature \(x\). Other normalisation approaches include the interquartile range (IQR), a measure of statistical dispersion that indicates how spread out the data are. The IQR is the difference between the 75th and 25th percentiles. The quartiles are denoted Q1 (lower quartile), Q2 (median) and Q3 (upper quartile), where Q1 and Q3 correspond to the 25th and 75th percentiles. The following equation specifies the IQR:

$$IQR={Q}_{3}-{Q}_{1}$$

(2)

Selecting a suitable normalisation method plays an essential role in the performance of the LSTM-BiGRU method. Normalising input variables to a common scale can improve the efficacy of learning models and the accuracy of predictions. Because different normalisation methods handle data scales and outliers differently, the choice of method can significantly influence how effectively the techniques learn patterns in the data. Determining the most appropriate method may require empirical assessment or insights from previous analyses that used comparable datasets and DL frameworks.
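As a minimal sketch of the two scaling ideas described above, the following Python snippet standardises one feature column with the Z-score and computes its IQR. It uses only the standard library; the sample values and the names `z_score`, `iqr` and `packet_sizes` are illustrative assumptions, not part of the original study, and the population standard deviation is assumed because the source does not say which variant is used.

```python
import statistics

def z_score(values):
    """Standardise one feature column: (x - mean(x)) / std(x)."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)  # assumption: population standard deviation
    if std == 0:
        # A constant feature has no spread; map it to zeros instead of dividing by 0.
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

def iqr(values):
    """Interquartile range: 75th percentile minus 25th percentile."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q3 - q1

# Hypothetical feature column (e.g. packet sizes in arbitrary units).
packet_sizes = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
scaled = z_score(packet_sizes)
spread = iqr(packet_sizes)
```

After scaling, the column has mean 0 and standard deviation 1, so it can be combined with features measured on other scales.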

Dimensionality reduction procedure

The RFE model is employed for the FS process to identify and retain the most significant features and thereby increase the model’s performance38. It was chosen for its ability to systematically select the most relevant features by recursively removing the least significant ones based on model performance. Unlike filter methods that rely solely on statistical measures, RFE considers feature importance within the learning algorithm, resulting in a more informed selection. It effectively reduces dimensionality, which decreases overfitting and improves computational efficiency. Compared to embedded methods, RFE offers greater flexibility in pairing with diverse models. Its iterative nature helps identify feature subsets that improve model accuracy. RFE is particularly suitable for complex tasks, such as DDoS detection, where eliminating irrelevant features significantly enhances performance.

RFE is an FS approach that identifies the essential features in a dataset by iteratively removing the least relevant ones according to their contribution to model performance. In this study, the datasets are high-dimensional, and RFE is particularly beneficial for reducing redundancy and enhancing the efficacy of ML techniques. By selecting only the most important features, RFE reduces computational overhead, yields models that are faster and more interpretable, enhances precision and copes with high dimensionality. Intrusion detection datasets frequently have a large number of attributes, and RFE ensures that only the informative ones are retained. RFE is applied to the pre-processed and scaled datasets to select the most important features before training ML methodologies, such as RF, decision tree and logistic regression.

A base estimator is used to assess feature importance. For instance, RF provides feature-importance scores derived from its constituent decision trees (DTs). Initially, the estimator is trained on the entire set of features. Let \(X\) be the input feature matrix, \(y\) the target labels and \(M\) the ML technique employed in RFE. The importance score of the \(i\)-th feature is specified as follows:

$$\:{I}_{i}=Importance\:of\:feature\:{x}_{i}\:as\:determined\:by\:M$$

(3)

The least significant features are eliminated iteratively until the desired number of features \(k\) remains. Let \({X}^{\left(t\right)}\) denote the feature matrix at iteration \(t\). At each iteration, \(M\) is trained on \({X}^{\left(t\right)}\) to compute the importance scores, and the \(r\) features with the lowest scores are eliminated:

$$\:{X}^{(t+1)}={X}^{\left(t\right)}\setminus\:\left\{{x}_{1},\:{x}_{2},\dots\:,{x}_{r}\right\}$$

(4)

Here, \(\{{x}_{1},\:{x}_{2},\:\dots\:,{x}_{r}\}\) denotes the \(r\) least significant features. The procedure halts once the number of retained features reaches the preferred number \(k\):

$$\:\text{halt if}\:\left|{X}^{\left(t+1\right)}\right|=k$$

(5)

The selected features are used to train the final model \({M}_{final}\), where \({X}^{\left(T\right)}\) denotes the feature matrix at the final iteration \(T\):

$$\:{M}_{final}=Train\left(M,\:{X}^{\left(T\right)},\:y\right)$$

(6)

Once features are selected using RFE, the reduced datasets are used to train the intrusion detection techniques, improving their computational efficiency and prediction accuracy. RF and DT classifiers were employed as the base estimators for RFE because they provide feature-importance scores directly.

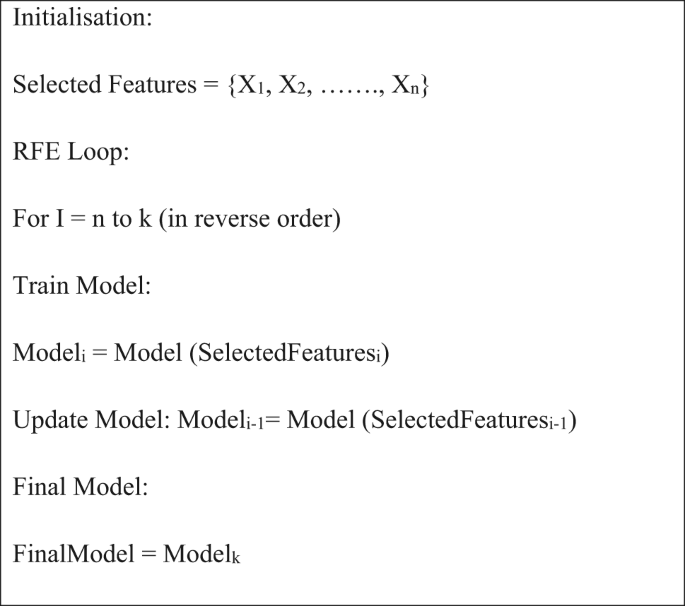

Algorithm 1: Pseudocode of RFE
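The elimination loop described above can be sketched in a few lines of Python. This is an illustrative sketch rather than the authors' implementation: the study uses RF and DT importances as \(M\), whereas the snippet swaps in a much simpler stand-in score (absolute Pearson correlation with the label) so that it runs with the standard library alone. The function names, the toy feature names and the data are all assumptions.

```python
import statistics

def abs_correlation(column, y):
    """Stand-in importance score (assumption): |Pearson correlation| with the label."""
    mx, my = statistics.fmean(column), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(column, y))
    var_x = sum((a - mx) ** 2 for a in column)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no information
    return abs(cov) / (var_x * var_y) ** 0.5

def rfe(features, y, k, r=1, importance=abs_correlation):
    """Drop the r lowest-scoring features per iteration until k remain.

    features: dict mapping feature name -> list of values (one per sample).
    """
    remaining = dict(features)
    while len(remaining) > k:
        # Re-score the surviving features at every iteration.
        scores = {name: importance(col, y) for name, col in remaining.items()}
        n_drop = min(r, len(remaining) - k)  # never go below k features
        for name in sorted(scores, key=scores.get)[:n_drop]:
            del remaining[name]
    return list(remaining)

# Hypothetical toy traffic data: label 1 = attack, 0 = benign.
y = [0, 0, 0, 1, 1, 1]
features = {
    "pkt_rate": [1, 2, 1, 9, 8, 9],
    "flow_dur": [5, 3, 4, 4, 5, 3],
    "syn_cnt": [0, 1, 0, 5, 6, 5],
    "ttl": [64, 64, 64, 64, 64, 64],
}
selected = rfe(features, y, k=2)
```

With a model-based importance such as RF, the scores change after every refit, which is what makes the recursion worthwhile; with the univariate stand-in used here the ranking stays fixed, so the example only illustrates the control flow.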

Hybridisation of DDoS attack classification

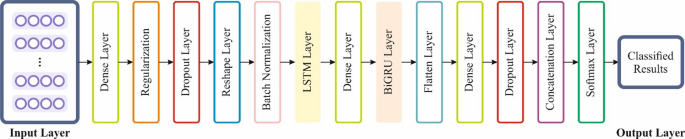

For the DDoS attack classification procedure, the RAIHFAD-RFE model uses a hybrid LSTM-BiGRU technique39. This hybrid model was chosen to combine the merits of both architectures in handling sequential network traffic data. LSTM excels at capturing long-term dependencies, while BiGRU processes data in both forward and backward directions for better context understanding. This combination improves the model’s capability to detect complex and evolving attack patterns compared to standalone RNNs or CNNs. Unlike conventional ML models, hybrid DL models adapt better to temporal dynamics. The hybrid design also enhances accuracy, robustness and generalisation on imbalanced or noisy datasets. Overall, the hybrid model provides a more reliable and efficient solution for DDoS detection. Figure 3 shows the framework of the LSTM-BiGRU model.

Structure of the LSTM-BiGRU technique.

Generally, LSTM networks are efficient at modelling and predicting time-series data because they introduce input, output and forget gates. These gates help alleviate the vanishing and exploding gradient problems to some extent. The forget gate, denoted by \({f}_{t}\), controls whether stored information is discarded. The input gate, \({i}_{t}\), controls which new information is added to the memory cell. The output gate, \({o}_{t}\), controls how much of the cell state is exposed in the hidden layer (HL) vector. The corresponding equations are presented in Eqs. (7) to (12).

$$\:{f}_{t}=\sigma\:\left({W}_{f}\left[{h}_{t-1},\:{x}_{t}\right]+{b}_{f}\right)$$

(7)

$$\:{i}_{t}=\sigma\:\left({W}_{i}\left[{h}_{t-1},{x}_{t}\right]+{b}_{i}\right)\:\:$$

(8)

$$\:{o}_{t}=\sigma\:\left({W}_{o}\left[{h}_{t-1},{x}_{t}\right]+{b}_{o}\right)\:$$

(9)

$$\:{\stackrel{\sim}{C}}_{t}=\tanh\left({W}_{c}\left[{h}_{t-1},{x}_{t}\right]+{b}_{c}\right)$$

(10)

$$\:{C}_{t}={f}_{t}\odot\:{C}_{t-1}+{i}_{t}\odot\:{\stackrel{\sim}{C}}_{t}$$

(11)

$$\:{h}_{t}={o}_{t}\odot\:\tanh\left({C}_{t}\right)$$

(12)

Here, \({x}_{t}\) denotes the input at time step \(t\); \({h}_{t}\) is the HL state at time step \(t\); \({\stackrel{\sim}{C}}_{t}\) is the candidate cell state at time step \(t\); \({C}_{t}\) is the updated cell state at time step \(t\); \({W}_{f}\), \({W}_{i}\), \({W}_{o}\) and \({W}_{c}\) are the weight matrices of the respective modules; \({b}_{f}\), \({b}_{i}\), \({b}_{o}\) and \({b}_{c}\) are the corresponding bias vectors; \(\sigma\) is the sigmoid activation function; and \(\odot\) denotes the Hadamard product.
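To make the gate equations concrete, the following sketch performs a single LSTM time step in plain Python, following Eqs. (7) to (12) term by term. The helper names (`sigmoid`, `affine`, `lstm_step`), the dictionary layout of the parameters and the all-zero toy weights are assumptions made for illustration; a practical model would rely on a DL framework with trained weights.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def affine(W, b, v):
    """Compute W·v + b, with W given as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + b_j for row, b_j in zip(W, b)]

def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM time step; p maps names such as 'W_f' and 'b_f' to parameters."""
    concat = h_prev + x_t  # list concatenation = [h_{t-1}, x_t]
    f_t = [sigmoid(v) for v in affine(p["W_f"], p["b_f"], concat)]      # Eq. (7)
    i_t = [sigmoid(v) for v in affine(p["W_i"], p["b_i"], concat)]      # Eq. (8)
    o_t = [sigmoid(v) for v in affine(p["W_o"], p["b_o"], concat)]      # Eq. (9)
    c_hat = [math.tanh(v) for v in affine(p["W_c"], p["b_c"], concat)]  # Eq. (10)
    # Eq. (11): keep part of the old cell state, add part of the candidate.
    c_t = [f * c + i * g for f, c, i, g in zip(f_t, c_prev, i_t, c_hat)]
    # Eq. (12): expose a gated, squashed view of the cell state.
    h_t = [o * math.tanh(c) for o, c in zip(o_t, c_t)]
    return h_t, c_t

# Toy parameters (assumption): 3 inputs, 2 hidden units, all weights zero,
# so every gate evaluates to sigmoid(0) = 0.5 and the candidate to tanh(0) = 0.
hidden, n_in = 2, 3
zeros = [[0.0] * (hidden + n_in) for _ in range(hidden)]
params = {name: zeros for name in ("W_f", "W_i", "W_o", "W_c")}
params.update({name: [0.0] * hidden for name in ("b_f", "b_i", "b_o", "b_c")})
h_t, c_t = lstm_step([1.0, 2.0, 3.0], [0.0, 0.0], [2.0, -2.0], params)
```

With zero weights the forget gate halves the previous cell state, which makes the role of each gate easy to verify by hand.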

Additionally, BiGRU is a neural network that combines the GRU with a bidirectional RNN structure. Compared to conventional RNNs, GRUs better address the problems of exploding and vanishing gradients while capturing longer-term dependencies in sequences. The bidirectional structure further improves the method by handling both past and future inputs, allowing improved processing of sequence data. BiGRU processes a sequence by passing it through two GRU networks, one in the forward direction and the other in the backward direction. The outputs from both directions are then concatenated to form the final output. BiGRU is therefore particularly beneficial for capturing dependencies within sequences, as it can consider both previous and future information. Adopting BiGRU to model the relationships among the intrusion-related features is thus expected to enhance prediction precision by reducing the model’s error. The essential elements of a GRU are the update and reset gates, which control how the HL state is updated and used through nonlinear transformations. The corresponding equations are presented in Eqs. (13) to (16).

$$\:{r}_{t}=\sigma\:\left({W}_{r}{x}_{t}+{U}_{r}{h}_{t-1}+{b}_{r}\right)\:$$

(13)

$$\:{z}_{t}=\sigma\:\left({W}_{Z}{x}_{t}+{U}_{z}{h}_{t-1}+{b}_{Z}\right)\:\:$$

(14)

$$\:{h}_{t}^{*}=\tanh\left({W}_{h}{x}_{t}+{r}_{t}\odot\:\left({U}_{h}{h}_{t-1}\right)+{b}_{h}\right)$$

(15)

$$\:{h}_{t}=\left(1-{z}_{t}\right)\odot\:{h}_{t}^{*}+{z}_{t}\odot\:{h}_{t-1}$$

(16)

Here, \({r}_{t}\) and \({z}_{t}\) denote the reset and update gates; \(\tanh\) is the hyperbolic tangent activation function; \({h}_{t}^{*}\) is the candidate HL state at time step \(t\); \({W}_{r}\), \({W}_{Z}\) and \({W}_{h}\) are the input weight matrices, and \({U}_{r}\), \({U}_{z}\) and \({U}_{h}\) the recurrent weight matrices, of the respective modules; \({b}_{r}\), \({b}_{Z}\) and \({b}_{h}\) are the corresponding bias vectors; and \(\odot\) denotes the Hadamard product.
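The bidirectional processing described above can be sketched as follows: one GRU reads the sequence forwards, a second reads it backwards, and the two hidden states are concatenated at each time step. This is a hedged illustration in plain Python, not the study's implementation. The parameter dictionary keys use lowercase gate names, the candidate state uses its own recurrent matrix named `U_h` with the reset gate applied after the matrix product (a common GRU variant, assumed here), and the toy parameters are invented for the example.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def gru_step(x_t, h_prev, p):
    """One GRU time step following Eqs. (13) to (16)."""
    wx = {g: matvec(p["W_" + g], x_t) for g in "rzh"}
    uh = {g: matvec(p["U_" + g], h_prev) for g in "rzh"}
    r_t = [sigmoid(a + b + c) for a, b, c in zip(wx["r"], uh["r"], p["b_r"])]
    z_t = [sigmoid(a + b + c) for a, b, c in zip(wx["z"], uh["z"], p["b_z"])]
    # Candidate state: the reset gate scales the recurrent contribution.
    h_cand = [math.tanh(a + r * b + c)
              for a, r, b, c in zip(wx["h"], r_t, uh["h"], p["b_h"])]
    # Blend candidate and previous state with the update gate.
    return [(1.0 - z) * hc + z * hp for z, hc, hp in zip(z_t, h_cand, h_prev)]

def run_gru(sequence, p, hidden_size):
    h, outputs = [0.0] * hidden_size, []
    for x_t in sequence:
        h = gru_step(x_t, h, p)
        outputs.append(h)
    return outputs

def bigru(sequence, p_fwd, p_bwd, hidden_size):
    """Concatenate forward and backward hidden states at every time step."""
    fwd = run_gru(sequence, p_fwd, hidden_size)
    bwd = run_gru(sequence[::-1], p_bwd, hidden_size)[::-1]  # re-align in time
    return [f + b for f, b in zip(fwd, bwd)]

def make_params(w_h):
    """Toy 1-input, 1-unit parameters: every weight is zero except W_h."""
    p = {f"{kind}_{g}": [[0.0]] for kind in "WU" for g in "rzh"}
    p.update({f"b_{g}": [0.0] for g in "rzh"})
    p["W_h"] = [[w_h]]
    return p

sequence = [[1.0], [0.0]]
outputs = bigru(sequence, make_params(1.0), make_params(1.0), hidden_size=1)
```

Each element of `outputs` has twice the hidden size, because the forward state summarises the inputs seen so far and the backward state summarises the inputs still to come.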

IOPA-based hyperparameter tuning model

To further optimise model performance, the improved orca predator algorithm (IOPA) is utilised for hyperparameter tuning so that the best hyperparameters are chosen for enhanced accuracy40. This algorithm was selected for its balance between exploration and exploitation, which helps it avoid local optima more effectively than conventional methods, such as grid search or GAs. It searches the hyperparameter space efficiently, resulting in faster convergence and improved optimisation. Compared to other metaheuristic algorithms, it requires fewer iterations to achieve better performance, making it a computationally efficient approach. This results in improved model accuracy and robustness, which is especially important for complex architectures such as the hybrid LSTM-BiGRU used in DDoS attack detection. Overall, IOPA offers a powerful and efficient approach for fine-tuning model parameters in dynamic network environments.

The orca predator algorithm (OPA) mimics the foraging behaviour of orcas (killer whales). An individual’s foraging tactic consists of three phases: driving, surrounding (encircling) and attacking prey. The algorithm tunes the parameters of the driving and surrounding phases to strike a balance between exploitation and exploration. During the attack phase, the best solution is identified without sacrificing the diversity of the particles by considering several of the best orcas (candidates) together with randomly selected ones. The OPA is described mathematically as follows:

1. The initial step is to assemble a group of orcas. The population consists of \({N}_{n}\) individuals, each located at a position in a \(Dim\)-dimensional search space, as expressed in Eq. (17):

$$X=\left[{x}_{1},\:{x}_{2},\:{x}_{3},\:\dots,\:{x}_{{N}_{n}}\right]=\left[\begin{array}{cccc}{X}_{1,1}&{X}_{1,2}&\cdots&{X}_{1,Dim}\\ {X}_{2,1}&{X}_{2,2}&\cdots&{X}_{2,Dim}\\ \vdots&\vdots&\ddots&\vdots\\ {X}_{{N}_{n},1}&{X}_{{N}_{n},2}&\cdots&{X}_{{N}_{n},Dim}\end{array}\right]$$

(17)

Here, \(X\) represents the population of candidate solutions, \({x}_{{N}_{n}}\) is the position of the \({N}_{n}\)-th candidate, \({N}_{n}\) is the population size and \(Dim\) is the dimension of the search space.


2. The second step is the chasing stage, which has two sub-steps: driving and encircling. The parameter \({p}_{1}\) sets the probability with which individuals follow each of these two sub-steps. A random number is compared with \({p}_{1}\) to choose between them: when the random number is greater than \({p}_{1}\), the driving process is used; otherwise, the encircling process is applied.


3. The third step is the driving procedure, which ensures that group members hold their positions relative to the group and remain close to the prey. The objective is to prevent individuals from drifting away from their target.

$$\:{V}_{chase,1,i}^{t}=a\times\:\left(d\times\:{x}_{best}^{t}-F\times\:\left(b\times\:{M}^{t}+c\times\:{x}_{i}^{t}\right)\right)\:\:$$

(18)

$$\:{V}_{chase,2,i}^{t}=e\times\:{x}_{best}^{t}-{x}_{i}^{t}\:\:$$

(19)

Here, \(t\) is the iteration number. \({V}_{chase,1,i}^{t}\) and \({V}_{chase,2,i}^{t}\) are the chasing velocities under the first and second chasing methods. \(d\) and \(b\) are random numbers in the interval (0, 1), and \(e\) is a random number in the range (0, 2). The chasing method is selected using \(q\), which lies in (0, 1), and \(F\) equals 2. \(M\) represents the mean position of the orca population.

$$\:M=\frac{{\sum\:}_{i=1}^{{N}_{n}}{x}_{i}^{t}}{{N}_{n}}\:\:$$

(20)

In this context, there are two chasing methods, and the choice between them depends on the population size. The first method is applied if \(rand>q\), and the second is applied if \(rand\le q\).

$$\:\left\{\begin{array}{l}{x}_{chase,1,i}^{t}={x}_{i}^{t}+{V}_{chase,1,i}^{t}\:\:if\:rand>q\\\:{x}_{chase,2,i}^{t}={x}_{i}^{t}+{V}_{chase,2,i}^{t}\:\:if\:rand\le\:q\end{array}\right.\:\:$$

(22)

4. The fourth step is to surround the prey. Here, new candidates are generated from three randomly selected individuals, as defined in Eqs. (23) and (24):

$$\:{x}_{chase,3,i,k}^{t}={x}_{d1,k}^{t}+u\times\:\:\left({x}_{d2,k}^{t}-{x}_{d3,k}^{t}\right)\:\:$$

(23)

$$u=2\times\left(randn-\frac{1}{2}\right)\times\frac{{\text{Max}}_{itr}-t}{{\text{Max}}_{itr}}$$

(24)

Here, \({\text{Max}}_{itr}\) is the maximum number of iterations. The randomly chosen candidates are denoted by \(d1\), \(d2\) and \(d3\), which are mutually distinct. If the third chasing tactic is selected, the resulting state is given by \({x}_{chase,3,i,k}^{t}\).

5. The fifth step completes the surrounding of the prey, in which every individual’s state is updated by comparing costs:

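$$\left\{\begin{array}{ll}{x}_{chase,i}^{t}={x}_{chase,i}^{t}&if\:f\left({x}_{chase,i}^{t}\right)<f\left({x}_{i}^{t}\right)\\ {x}_{chase,i}^{t}={x}_{i}^{t}&if\:f\left({x}_{chase,i}^{t}\right)\ge f\left({x}_{i}^{t}\right)\end{array}\right.$$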

(25)

Here, \(f\left({x}_{chase,i}^{t}\right)\) is the value of the cost function at \({x}_{chase,i}^{t}\), and \(f\left({x}_{i}^{t}\right)\) is the value of the cost function at \({x}_{i}^{t}\).

6. The sixth step is to attack the prey. The four best individuals occupy the top four positions. The candidates’ positions and velocities during the attack are computed using the equations below:

$$\:{V}_{attack,1,i}^{t}=\frac{({x}_{1}^{t}+{x}_{2}^{t}+{x}_{3}^{t}+{x}_{4}^{t})}{4}-{x}_{chase,i}^{t}\:\:$$

(26)

$$\:{V}_{attack,2,i}^{t}=\frac{\left({x}_{chase,d1}^{t}+{x}_{chase,d2}^{t}+{x}_{chase,d3}^{t}\right)}{3}-{x}_{i}^{t}\:\:$$

(27)

$$\:{x}_{attack,i}^{t}={x}_{chase,i}^{t}+{g}_{1}\times\:{V}_{attack,1,i}^{t}+{g}_{2}\times\:{V}_{attack,2,i}^{t}\:\:$$

(28)

Here, \({V}_{attack,1,i}^{t}\) and \({V}_{attack,2,i}^{t}\) are the attack velocity vectors. The four best individuals are denoted by \({x}_{1}^{t}\), \({x}_{2}^{t}\), \({x}_{3}^{t}\) and \({x}_{4}^{t}\). The three randomly chosen individuals are denoted by \(d1\), \(d2\) and \(d3\), which differ from one another. The state after the attacking stage is given by \({x}_{attack,i}^{t}\). The variables \({g}_{1}\) and \({g}_{2}\) are random values within the intervals (0, 2) and (−2.5, 2.5), respectively.

7. The seventh step concludes the attack stage. The orcas’ positions are checked against the lower boundary \(\left(lb\right)\) of the problem.
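The seven steps above can be sketched as a single OPA iteration in plain Python. This is a simplified illustration under several stated assumptions, not the authors' implementation: `a` is drawn from (0, 1) and `c = 1 - b` (neither is defined in the text), the default values of `p1` and `q` are arbitrary, the greedy comparison of Eq. (25) is applied to every chased candidate, and positions are clamped to both a lower and an upper bound although the text mentions only the lower one.

```python
import random

def opa_iteration(pop, cost, t, max_itr, lb, ub, p1=0.5, q=0.9, F=2.0, rng=random):
    """One simplified OPA iteration over a population of position lists."""
    n, dim = len(pop), len(pop[0])
    ranked = sorted(pop, key=cost)
    x_best, top4 = ranked[0], ranked[:4]
    mean = [sum(x[k] for x in pop) / n for k in range(dim)]  # Eq. (20)

    chased = []
    for x in pop:
        if rng.random() > p1:  # driving (assumed direction of the p1 test)
            if rng.random() > q:  # first chasing method, Eq. (18)
                a, b, d = rng.random(), rng.random(), rng.random()
                c = 1.0 - b  # assumption: c is not defined in the text
                v = [a * (d * xb - F * (b * m + c * xi))
                     for xb, m, xi in zip(x_best, mean, x)]
            else:  # second chasing method, Eq. (19)
                e = rng.uniform(0.0, 2.0)
                v = [e * xb - xi for xb, xi in zip(x_best, x)]
            cand = [xi + vi for xi, vi in zip(x, v)]  # Eq. (22)
        else:  # encircling with three distinct random individuals, Eqs. (23)-(24)
            d1, d2, d3 = rng.sample(pop, 3)
            cand = []
            for k in range(dim):
                u = 2.0 * (rng.gauss(0.0, 1.0) - 0.5) * (max_itr - t) / max_itr
                cand.append(d1[k] + u * (d2[k] - d3[k]))
        # Eq. (25): keep the chased position only if it lowers the cost.
        chased.append(cand if cost(cand) < cost(x) else x)

    new_pop = []
    for x, xc in zip(pop, chased):
        d1, d2, d3 = rng.sample(chased, 3)
        g1, g2 = rng.uniform(0.0, 2.0), rng.uniform(-2.5, 2.5)
        attacked = []
        for k in range(dim):
            v1 = sum(best[k] for best in top4) / 4.0 - xc[k]  # Eq. (26)
            v2 = (d1[k] + d2[k] + d3[k]) / 3.0 - x[k]         # Eq. (27)
            value = xc[k] + g1 * v1 + g2 * v2                  # Eq. (28)
            attacked.append(min(max(value, lb), ub))           # boundary check
        new_pop.append(attacked)
    return new_pop

# Hypothetical usage on a toy cost (sphere function) with a fixed seed.
rng = random.Random(0)
sphere = lambda x: sum(v * v for v in x)
pop = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(10)]
new_pop = opa_iteration(pop, sphere, t=1, max_itr=50, lb=-5.0, ub=5.0, rng=rng)
```

For hyperparameter tuning, each position vector would encode one candidate setting of the LSTM-BiGRU hyperparameters, and `cost` would train and evaluate the classifier for that setting.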

As previously stated, the primary objective of this paper is to utilise OPA for optimal parameter selection. Selecting the best parameters is a complex task, and the standard OPA requires numerous steps to achieve accurate results and acceptable convergence rates in complex settings. To address these difficulties, enhancements are made to increase the efficacy and robustness of the method, resulting in the IOPA. The defining feature of the IOPA is the addition of a removal stage. At the start of every iteration, the algorithm removes ineffective individuals to make room for new individuals in unexplored regions of the solution space. This stage significantly enhances the algorithm’s ability to explore, enabling it to examine numerous pathways towards the optimal solution.

Eliminating individuals trapped in local optima helps the algorithm avoid suboptimal solutions and explore more effectively. The IOPA is characterised by a dynamic exploration area in which new starting points are frequently added. This adaptive feature enables the algorithm to avoid becoming trapped in suboptimal solutions and expands its solution area. Removing the least effective starting points ensures that computational resources are concentrated on the more promising regions of the solution area. The removal stage thus acts as a filter that steers the algorithm towards productive regions of the search space.
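The text does not specify how individuals are judged ineffective or how their replacements are generated, so the following Python sketch is only one plausible reading of the removal stage. It assumes that a fixed fraction of the worst-ranked individuals is discarded and that replacements are sampled uniformly within the bounds; the function name `removal_stage`, the `fraction` parameter and both of these choices are assumptions.

```python
import random

def removal_stage(pop, cost, lb, ub, fraction=0.2, rng=random):
    """Replace the worst `fraction` of the population with fresh random individuals.

    Assumptions: 'ineffective' means highest cost, and new individuals are
    sampled uniformly in [lb, ub] for every dimension.
    """
    n_remove = int(len(pop) * fraction)
    if n_remove == 0:
        return list(pop)
    ranked = sorted(pop, key=cost)            # best (lowest cost) first
    survivors = ranked[:len(pop) - n_remove]  # drop the worst individuals
    dim = len(pop[0])
    fresh = [[rng.uniform(lb, ub) for _ in range(dim)] for _ in range(n_remove)]
    return survivors + fresh

# Hypothetical usage on a toy cost function with a fixed seed.
rng = random.Random(1)
sphere = lambda x: sum(v * v for v in x)
pop = [[rng.uniform(-5.0, 5.0) for _ in range(2)] for _ in range(10)]
refreshed = removal_stage(pop, sphere, lb=-5.0, ub=5.0, fraction=0.2, rng=rng)
```

Calling such a function at the start of every iteration keeps the population size constant while continually injecting new starting points.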

The proposed enhancements offer several advantages, including improved precision and stronger exploration. The IOPA examines different solutions by dynamically refreshing the solution area and supplying new starting points, which reduces the probability of becoming trapped in local optima. This improved search strategy and its exploration of dissimilar paths distinguish it from the standard OPA, making it particularly suitable for complex parameter identification problems. Table 2 presents a comparative analysis of IOPA and advanced optimizers for hyperparameter tuning in DDoS detection. It summarises the key differences between IOPA and other optimizers, namely Bayesian optimization (BO), covariance matrix adaptation evolution strategy (CMA-ES), particle swarm optimization (PSO) and genetic algorithm (GA). It also highlights IOPA’s faster convergence and better avoidance of local minima owing to its iterative removal step, which slightly increases computational overhead. This trade-off yields higher accuracy, supporting the suitability of the IOPA model for DDoS detection tasks.

The IOPA technique uses a fitness function (FF) to achieve better classification performance. The FF assigns a positive value to each candidate solution to indicate its quality, with smaller values being better. The classification error rate, which is to be minimised, is taken as the FF, as given in Eq. (29).

$$\:fitness\left({x}_{i}\right)=Classifier\:Error\:Rate\left({x}_{i}\right)$$

$$\:=\frac{number\:of\:misclassified\:samples}{Total\:no\:of\:samples}\times\:100\:$$

(29)
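Eq. (29) translates directly into code. The short Python sketch below computes the error rate, as a percentage, from true and predicted labels; the function name and the sample labels are illustrative assumptions.

```python
def classifier_error_rate(y_true, y_pred):
    """Fitness of a candidate: percentage of misclassified samples, Eq. (29)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("at least one sample is required")
    misclassified = sum(1 for t, p in zip(y_true, y_pred) if t != p)
    return misclassified / len(y_true) * 100.0

# Hypothetical labels: 1 = DDoS attack, 0 = benign traffic.
fitness = classifier_error_rate([1, 0, 1, 1], [1, 1, 1, 0])
```

In the tuning loop, the optimizer would evaluate this value for the classifier trained with each candidate's hyperparameters and prefer candidates with lower values.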
