To adopt or to ban? Student perceptions and use of generative AI in higher education

A notable proportion of the interviewees self-reported frequent use of digital devices and services (Table 2), as well as “excellent” (18.1%) or “good” (59.3%) digital literacy. The combined total of these two responses represents 77.4% of the sample. In contrast, only a negligible proportion of respondents (1.5%) indicated that they possessed inadequate digital competencies.

Because the data do not fully meet the assumption of normality, a non-parametric statistical test was employed (i.e. the chi-square test, α = 0.05) to assess the association between variables. To estimate the strength of the association between variables (i.e. the effect size), Cramér’s V was used. The gender variable was found to be statistically significantly associated with digital literacy, χ² (6, N = 1,366) = 78.385, p = 0.000, ϕc = 0.169; the level of self-perceived digital literacy among females was markedly lower compared to other participants, particularly with respect to the highest position on the semantic scale, “excellent” digital skills (female: 8.1%; male: 25.5%; other: 21.7%). Furthermore, there was a slight but significant positive association between self-declared digital skills and age. Because respondents’ age was a continuous numeric variable, a non-parametric test (Kendall’s Tau B) was employed to cross-reference it with the other variables in the questionnaire. This was done out of caution due to the non-normal distribution of the data, as evidenced by the fact that three quarters of the sample was aged between 19 and 25 (Age * Self-declared digital literacy: N = 1,366; τb = 0.094, p = 0.000).
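As a rough check on how the effect size relates to the test statistic, the following minimal sketch recomputes Cramér’s V from the chi-square value reported for gender and digital literacy. The table dimensions (three gender categories by four literacy levels) are an assumption inferred from the reported six degrees of freedom, and the function name `cramers_v` is illustrative rather than taken from the study.

```python
from math import sqrt


def cramers_v(chi2: float, n: int, n_rows: int, n_cols: int) -> float:
    """Cramér's V = sqrt(chi2 / (n * min(rows - 1, cols - 1)))."""
    k = min(n_rows - 1, n_cols - 1)
    if n <= 0 or k <= 0:
        raise ValueError("need a positive sample size and at least a 2x2 table")
    return sqrt(chi2 / (n * k))


# Reported values: chi-square = 78.385, N = 1,366.
# Assumed table shape: 3 gender categories x 4 literacy levels (df = 6).
v = cramers_v(78.385, 1366, 3, 4)
print(round(v, 3))  # prints 0.169, matching the reported effect size
```

Under these assumptions the recomputed value agrees with the reported ϕc = 0.169, which by common rules of thumb indicates a weak to moderate association.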

This bias in the convenience sample, which is inclined towards students with a high level of digital skills, can probably be attributed to self-selection: those who are more closely aligned with the research topic were more likely to engage with the survey, whereas those who are less interested may have been less inclined to participate. This prompts the initial, provisional consideration of the following point: the topic of AI appears to hold greater interest for students who, for reasons related to their educational field, are more attuned to the digital realm. This is noteworthy, particularly in view of the fact that, in coming years, GenAI technologies are likely to have a considerable influence on the future employment prospects of many students who do not pursue STEM subjects. One illustrative example is the field of foreign languages (which accounts for only 2.7% of the sample), a field that is likely to be significantly affected by this trend: a recent survey conducted by the Society of Authors revealed that over a third of translators have already lost work due to AI, and over four in ten translators reported a decline in income as a consequence of these technological advances (Creamer, 2024).

Current (and future) use of GenAI

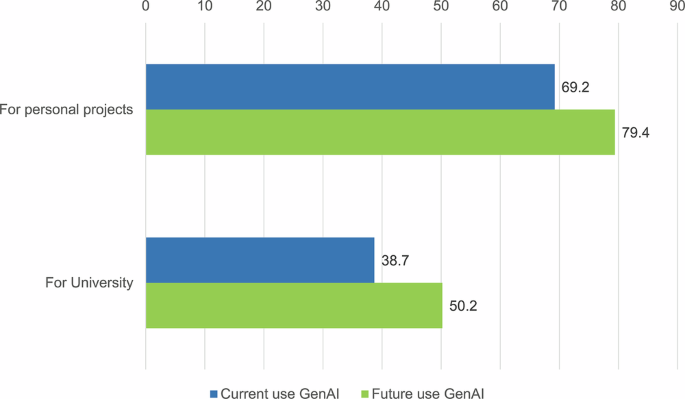

A majority of the sample (69.2%) have already used ChatGPT (or analogous resources) “for their personal projects, out of curiosity or just for fun”. This percentage falls by nearly half when the question focuses on university-related tasks: “I use or have used ChatGPT to help me undertake assignments or exams” (38.7%). When future intentions were considered, the pattern remained consistent, with a notable increase in the number of individuals who expressed plans to use these resources in the future. Half of the interviewees indicated that they intended to employ AI for academic purposes in the future (Fig. 1).

Fig. 1: Declaration of (current and future) use of ChatGPT (or similar resources) for personal or university projects (%).

The data indicate that there is a gender difference in the use of ChatGPT, with a gap of 24.8 percentage points in favour of males compared to females regarding current use of AI for personal projects (male: 79.7%; female: 54.9%; other: 78.3%) and of 17.3 percentage points for current use of AI for academic tasks (male: 46.1%; female: 28.8%; other: 39.1%). The association between gender and use is statistically significant (Table 3). Age is significantly negatively correlated with the use of AI: as the age of the interviewees increases, the use of AI tends to decrease, both for personal activities and for university-related tasks (Table 3).

To assess the correlation between digital skills and the use of ChatGPT, a digital competence index was constructed by summing the values of the responses for the self-declaration of digital skills with those relating to the use of smartphones, personal computers and search engines (the digital devices and services most used by the sample). This continuous index has a minimum value of 8, a maximum of 16, a standard deviation of 1.44695, a mean of 13.9605, a median of 14 and a mode of 15. The standard deviation is low because the mean, median and mode are very high (i.e. they are close to the maximum of the index), which reflects the tendency of the sample to have a high level of digital literacy. The use of AI is positively correlated with the digital competence index, both for personal and university-related use (Table 3).

The overall data reveal a discrepancy, amounting to 30.5 percentage points, between the declared use of AI for personal projects and that for academic purposes. Nearly one-third of the sample asserted that they used AI but indicated that they had not employed it for university-related tasks. It is challenging to conceive that such a considerable proportion of students, despite their presumed familiarity with these technologies, would restrict their use to personal endeavours and avoid their integration into academic pursuits. As will be discussed later, the data from other questions suggest that at least some of this discrepancy could be attributable to a social desirability effect, whereby respondents may have been inclined to under-declare the use of AI for academic tasks.

A closer examination of the group of students who had used AI for their personal projects but not for academic purposes revealed a demographic profile that was predominantly male, concentrated in master’s and doctoral programmes, and primarily engaged in the fields of computer science and ICT, industrial and information engineering, and literature and the humanities. These respondents tended to view AI with greater “seriousness” than others: they were more likely to believe that professors are capable of discerning instances of AI being employed to fulfil academic responsibilities. They were also more inclined to view the use of AI for educational purposes as “morally wrong” and believe that such applications may undermine “the purposes of education” (Footnote 2).

It is hypothesised that at least some of the responses provided by this 30.5% of students were not entirely truthful and may be influenced by: social desirability; a potential bias associated with the composition of the sample, given that the invitation to complete the questionnaire originated from an official university channel; a possible lack of trust in the actual anonymity of the answers; and an underlying doubt regarding the question: “can AI be used to perform academic tasks or is its use considered incorrect behaviour or a scam?” Indeed, at the time of the interviews, Italian universities were not yet regulating the use of ChatGPT or other LLMs. Even today, as of this writing, only a few Italian universities have adopted policies to do so.

How ChatGPT and similar tools are used to perform academic tasks

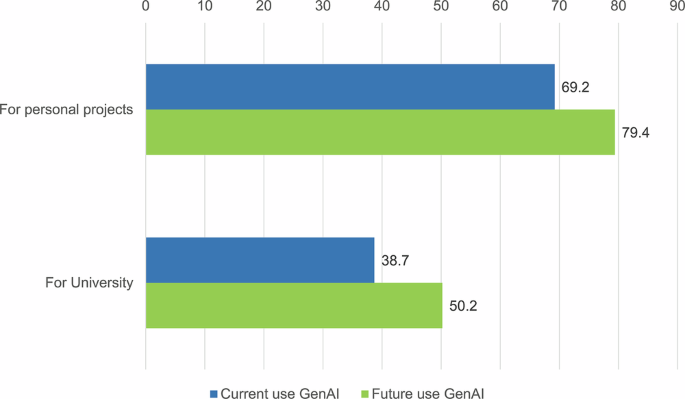

Our suspicions were confirmed by the responses to the question “how do you usually use ChatGPT (or analogous resources) to perform a task, complete an exam or accomplish another university-related activity?” Most students who used ChatGPT for academic tasks said they did so “to perform only some parts of the work and do the rest on their own” or “to perform most of the task but making changes when needed” (Fig. 2). Only a few students used AI to do the assignment, delivering it “without checking it or making changes” (Footnote 3). This evidence lends support to the idea of social desirability inherent in the responses (“I use it, but I do not declare that I have used it”).

Fig. 2: How ChatGPT (or a similar tool) has been used to perform university tasks (%).

However, the most significant finding was that the collective responses to the various applications of ChatGPT in an academic context yielded data that diverge from the direct question previously discussed. The total number of individuals who, in response to this prompt, indicated they had used ChatGPT for academic purposes accounted for 48.8% of the sample, representing a notable increase from the 38.7% observed with the preceding inquiry.

In light of the responses to this question, it can be suggested that 48.8% of respondents (10.1 percentage points more than for the previous direct question) “admitted” to using GenAI for academic purposes. This measure was therefore deemed to be more realistic than the previous one. It can thus be concluded that, at the time of the interviews, around half of the Italian university students were already employing GenAI to some extent to facilitate their academic endeavours.

By isolating the 164 students who initially denied using AI for academic tasks but later answered in the affirmative to a more specific question regarding modes of use (thus revealing a contradiction in their responses), a pattern emerged. Most of these students identified as male, had an average age slightly higher than the overall sample and were enrolled in either a master’s or doctoral programme. This student cohort tended to be enrolled in programmes such as industrial and information engineering, computer science and ICT, and literature and the humanities. The students in this group reported receiving greater attention from professors on the subject of AI, as well as a high level of self-declared computer skills. Furthermore, they made greater use of digital devices and services. A significant proportion of these respondents indicated that they had used AI solely to “perform a minimal part of a task”; they expressed the view that universities should not prohibit the use of AI; and for them, AI would have a beneficial impact on information retrieval, writing, critical thinking and creativity. AI would affect the professional decisions of these individuals, but they appeared to be unconcerned about the broader societal implications of AI and its potential impact on employment (Footnote 4).

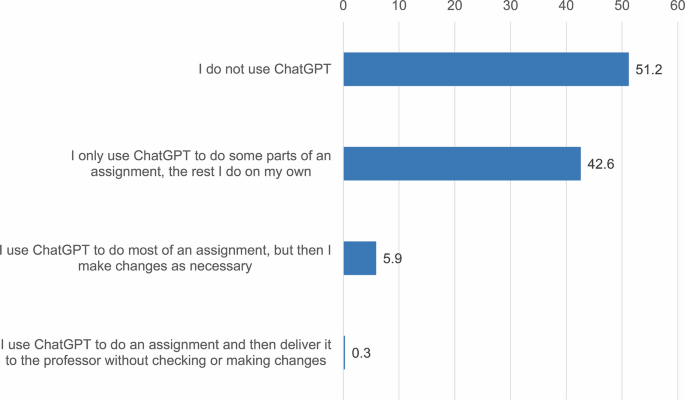

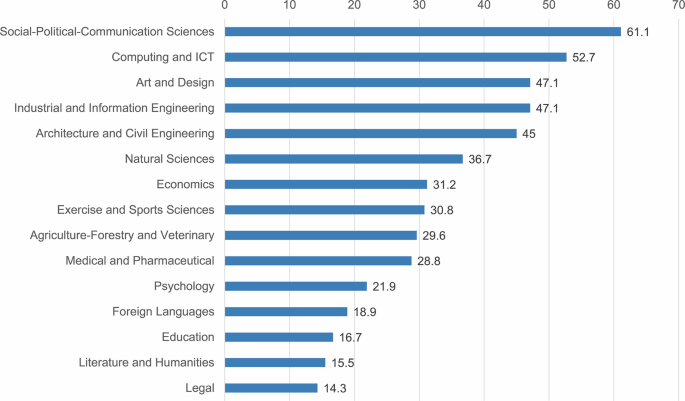

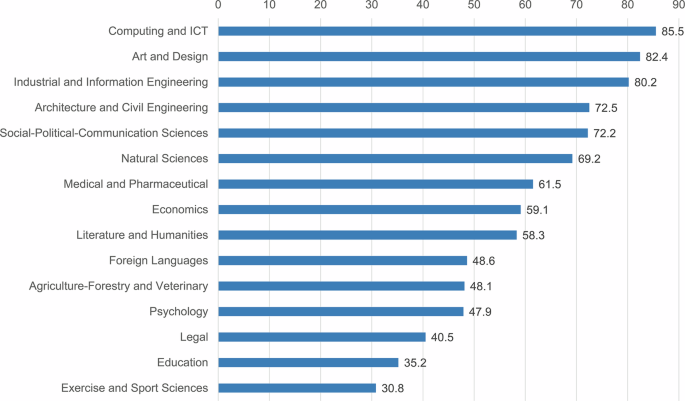

It might be assumed that the finding that half of the students are already using GenAI to perform academic tasks is due to the use of a convenience sample in which most students were enrolled in computer science and engineering courses. This is a reasonable assumption; however, some unexpected results emerged when the courses of study were isolated (Fig. 3). The highest proportion of students using AI for academic tasks was observed in the political, social and communication sciences (61.1%), followed by students enrolled in computer science and ICT courses (52.7%), as well as students in the industrial and information engineering, art and design, and architecture and civil engineering programmes. The students who used GenAI to a lesser extent tended to be in the humanities fields, including foreign languages, psychology, education and training, as well as law, literature and other related disciplines. The use of AI for university-related tasks was significantly associated with the course of study and exhibited a notable effect size, as estimated using Cramér’s V (Table 3).

Fig. 3: I use or have used ChatGPT to help me with homework or exams (%).

The association between course of study and the use of AI “for personal projects, out of curiosity or just for fun” was even more pronounced. The results (Fig. 4) are more in line with expectations, with students in the fields of ICT and engineering declaring the highest percentage of use (85.5%), followed closely by those in art and design and industrial and information engineering courses. The fact that the use of AI for personal projects was more strongly associated (Table 3) with the course field (ϕc = 0.338) than the declared use for academic purposes (ϕc = 0.280) lends further support to the hypothesis that responses regarding the use of AI for university-related activities were partly underestimated due to social desirability.

Fig. 4: I use or have used ChatGPT for my personal projects, out of curiosity or just for fun (%).

Students’ opinions about AI: Moral aspects and university regulation

The questionnaire comprised two sets of questions about students’ opinions, and participants were asked to indicate their level of agreement on a four-point scale (“strongly disagree” = 1; “slightly disagree” = 2; “slightly agree” = 3; “strongly agree” = 4). Table 4 contains all tested opinions, detailed results, means and standard deviations.

A focus on opinions about the morality of using AI for university-related activities and its relation to the purposes of education reveals that 29.5% of respondents expressed strong agreement with the statement “the use of ChatGPT to perform tasks and exams is morally wrong”. Furthermore, when the responses indicating slight agreement are included, over half of the total sample (58.3%) aligned with this perspective. This viewpoint was not significantly associated with gender but exhibited a positive correlation with the digital competence index (N = 1,366; τb = 0.095, p = 0.000).
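For readers unfamiliar with the statistic, the following is a minimal, self-contained sketch of how Kendall’s τb can be computed for two ordinal variables with many tied values, as is typical of Likert-type items and summed indices. The function name `kendall_tau_b` and the toy data are hypothetical and are not drawn from the survey; the quadratic pairwise loop is chosen for readability rather than speed.

```python
from itertools import combinations
from math import sqrt


def kendall_tau_b(x, y):
    """Kendall's tau-b: (C - D) / sqrt((C + D + Tx) * (C + D + Ty)).

    C, D = concordant and discordant pairs; Tx, Ty = pairs tied only on x
    or only on y. Pairs tied on both variables are ignored.
    """
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    concordant = discordant = tied_x = tied_y = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x1 - x2, y1 - y2
        if dx == 0 and dy == 0:
            continue            # tied on both variables
        if dx == 0:
            tied_x += 1         # tied on x only
        elif dy == 0:
            tied_y += 1         # tied on y only
        elif dx * dy > 0:
            concordant += 1     # both variables move in the same direction
        else:
            discordant += 1     # variables move in opposite directions
    denom = sqrt((concordant + discordant + tied_x)
                 * (concordant + discordant + tied_y))
    if denom == 0:
        raise ValueError("tau-b is undefined when one variable is constant")
    return (concordant - discordant) / denom


# Hypothetical toy data: an index score and a 4-point agreement rating.
index_scores = [1, 2, 2, 3]
agreement = [1, 2, 3, 3]
print(kendall_tau_b(index_scores, agreement))
```

Values close to zero, such as those reported in this section, indicate weak monotonic associations even when they are statistically significant in a sample of this size.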

A more detailed analysis of the data reveals an interesting effect size, as students who reported using AI for personal projects but not for academic tasks were more likely to view the use of AI for university-related activities as morally wrong, χ² (2, N = 1,366) = 50.480, p = 0.000, ϕc = 0.192. This finding aligns with our hypothesis that respondents may under-declare AI use for academic tasks. The data pertaining to the opinion “the use of ChatGPT by students defeats the purpose of education” are more nuanced: 15.7% of respondents indicated that they strongly agree, while 21.5% slightly agree (a total of 37.2%). In this case, a notable correlation emerged between gender and attitudes towards the use of GenAI. Specifically, females were significantly more inclined to believe that the deployment of this technology would be contrary to the basic purposes of education (Gender * “Students’ use of ChatGPT defeats the purpose of education”: Totally agree: male: 13.2%; female: 19%; other: 13%; slightly agree: male: 18.5%; female: 25.7%; other: 17.4% – χ² [2, N = 1,366] = 34.249, p = 0.000, ϕc = 0.112). Moreover, a negative correlation was observed between digital literacy and agreement with this viewpoint (τb = −0.113, p = 0.000). Age did not emerge as a significant factor.

In summary, a notable proportion of students considered the use of AI for academic tasks to be a form of dishonest conduct, which they perceived as having an adverse impact on the efficacy and intrinsic value of their studies, ultimately undermining the fundamental objectives of education. It is important to note, however, that a minority of students believed that the use of AI at university is not morally wrong (11.1%) and that it does not defeat the purpose of education (23.9%).

A significant majority of students concurred with the view that universities should regulate the use of GenAI and advocate for its conscious use: 81.4% of respondents indicated their agreement with this position, with 37% expressing strong agreement. At the same time, a similar proportion of students (81%) asserted that, while AI tools may require regulation, universities should not prohibit their use. Males were particularly opposed to the idea of a university ban on AI (Gender * “The university should ban the use of ChatGPT”: Totally disagree: male: 55.2%; female: 38.9%; other: 47.8% – χ² [2, N = 1,366] = 44.899, p = 0.000, ϕc = 0.128). The same was true for younger respondents (τb = 0.088, p = 0.000) and those with the highest digital skills (τb = −0.143, p = 0.000).

Students’ opinions on AI: Effectiveness and reliability of AI tools

A majority of the sample (63.3%) believed that ChatGPT outputs could be mistaken for “human” (slightly agree: 51.7%; strongly agree: 11.6%). Nevertheless, the respondents expressed reservations about the accuracy of the outputs of AI (81.9%). This negative opinion about the reliability of ChatGPT outputs was expressed more frequently by males than females, and this association was statistically significant, although weak (Gender * “ChatGPT always generates accurate and reliable results”: Totally disagree: male: 46.2%; female: 35.8%; other: 47.8% – χ² [2, N = 1,366] = 24.858, p = 0.000, ϕc = 0.095). Furthermore, the perceived reliability of the results provided by these AI tools decreased significantly as the digital competence index increased (τb = −0.114, p = 0.000). This may be indicative of a correlation between greater use of AI and greater awareness of the current limitations of these tools. It is noteworthy that, for around a third of the sample (34.2%), the results of GenAI, which were deemed unreliable, were nevertheless perceived to be “better than what I can achieve on my own”.

Students’ opinions about AI: Future choices, fears and concerns

In terms of future expectations, the majority of students (87% overall, 35.8% expressing a strong conviction) believed that AI would become the new normal, and students with higher digital skills were more likely to hold this view (τb = −0.151, p = 0.000). Nevertheless, there was no indication that the advent of AI tools was perceived as a factor that could truly influence their choices. Only 23% of students indicated that AI was influencing their decisions regarding their future academic trajectory. A similar observation could be made regarding AI’s potential impact on students’ professional trajectory: only 6% of students indicated that they perceived this influence with clarity. It is noteworthy that these responses were significantly associated with gender, with females indicating less influence from AI on both their study path and professional future (Gender * “AI is influencing my choice of future study path”: Totally disagree: male: 47.8%; female: 58.9%; other: 52.2% – χ² [2, N = 1,366] = 21.943, p = 0.001, ϕc = 0.090; Gender * “AI is influencing the choice of career path I intend to follow after graduation”: Totally disagree: male: 44.5%; female: 57%; other: 52.2% – χ² [2, N = 1,366] = 30.074, p = 0.000, ϕc = 0.105). No correlations were observed with age.

Conversely, the influence of AI on educational and professional choices was found to increase alongside digital skills (Digital competence index * “AI is influencing my choice of future study path”: τb = 0.090, p = 0.000; Digital competence index * “AI is influencing my choice of future career path after graduation”: τb = 0.094, p = 0.000). It can thus be concluded that for more than three-quarters of the sample group, this prospective new normal has not been a factor influencing their decisions about their academic pursuits or their professional/career choices. Furthermore, half of the sample refuted this influence outright.

Moreover, the data consistently indicate low levels of concern regarding the potential impact of AI on one’s educational experience and future career prospects. The majority of respondents (77.9%) indicated that they were not concerned about the potential impact of AI on their education: only 6.9% expressed strong agreement with this concern. Concern was higher regarding the impact of AI on their future career (39.1%), although only 11.2% of students responded “strongly agree”. These low concerns were pervasive across the sample and were not associated with gender or with age (Footnote 5). They were, however, negatively associated with the digital competence index (Digital competence index * “I am concerned about the impact of AI on my education”: τb = −0.106, p = 0.000; Digital competence index * “I am concerned about the impact of AI on my future career”: τb = −0.088, p = 0.000).

When the opinions in question pertained to more abstract and distant topics, two-thirds of the sample indicated a degree of concern: 66.1% of respondents indicated that they were concerned about the potential impact of AI on society, with 28% expressing a strong level of concern. This opinion was more prevalent among females than males (Gender * “I am concerned about the impact of AI on society in general”: Totally agree: male: 24.7%; female: 31.6%; other: 47.8% – χ² [2, N = 1,366] = 27.481, p = 0.000, ϕc = 0.100). Agreement with this view tended to decrease as digital literacy increased (Digital competence index * “I am concerned about the impact of AI on society as a whole”: τb = −0.098, p = 0.000). A comparable proportion of respondents anticipated that AI would result in the elimination of numerous occupations over the long term (68%, of whom 26.3% expressed strong agreement). There were no notable associations with gender on this point.

In general, as questions moved away from the personal and concrete towards the general and abstract, students’ concerns grew (and vice versa). The fears highlighted by students concerned society in general (the impact of AI “on others”) rather than their personal experience. To gain further insight into this aspect, a concern index was calculated by summing the declared level of concern about the impact of AI on “my education”, “my future career”, “society in general” and “future of jobs”. The concern index is continuous (minimum = 4, maximum = 16) with a mean of 9.7635, a median and mode of 10, and a standard deviation of 2.88493. Table 5 shows all the questionnaire variables that were found to be significantly associated with the concern index.
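The construction of the concern index can be illustrated with a short sketch. It assumes that each of the four items is scored on the four-point agreement scale described earlier (1 = “strongly disagree” to 4 = “strongly agree”), which is consistent with the stated range of 4 to 16. The item keys and the example respondent are hypothetical and are not taken from the questionnaire data.

```python
# Hypothetical keys for the four concern items named in the text.
CONCERN_ITEMS = (
    "my_education",
    "my_future_career",
    "society_in_general",
    "future_of_jobs",
)


def concern_index(responses: dict) -> int:
    """Sum the four concern items (each assumed to be scored 1-4)."""
    total = 0
    for item in CONCERN_ITEMS:
        score = responses[item]
        if score not in (1, 2, 3, 4):
            raise ValueError(f"{item}: expected a score from 1 to 4, got {score!r}")
        total += score
    return total


# Hypothetical respondent: little personal concern, more concern for society.
example = {
    "my_education": 1,
    "my_future_career": 2,
    "society_in_general": 4,
    "future_of_jobs": 3,
}
print(concern_index(example))  # prints 10
```

A respondent who strongly disagrees with every item scores 4 and one who strongly agrees with every item scores 16, which reproduces the minimum and maximum of the index.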

Impact of AI on human capabilities

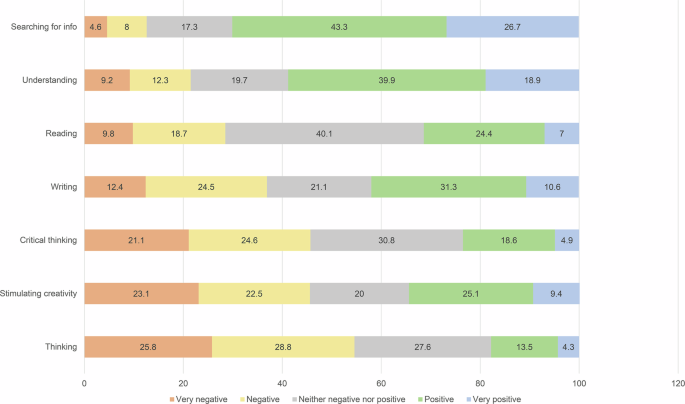

We broadened the scope of our investigation beyond the university context by including questions about the potential future impact of AI on activities and capabilities considered purely human. Respondents were invited to indicate their opinions on a five-point scale, with 1 indicating a very negative impact, 2 a negative impact, 3 neither a positive nor a negative impact, 4 a positive impact and 5 a very positive impact. The responses yielded a rather contradictory picture. The prevailing opinion among our students was that AI may have a negative effect on the capacity to think (M = 2.42, SD = 1.136) and on the specific process of critical thinking (M = 2.62, SD = 1.151). In contrast, the advent of AI should facilitate enhanced capabilities in searching for information (M = 3.8, SD = 1.065) and understanding complex concepts (M = 3.47, SD = 1.195). The data pertaining to the impact on the capacity to write (M = 3.03, SD = 1.217), read (M = 3, SD = 1.05) or stimulate creativity (M = 2.75, SD = 1.309) offered a set of results that was more nuanced and challenging to interpret (Footnote 6). No significant association was observed between these responses and gender. Conversely, as the digital competence index increased, the perception of a positive effect of AI on human capabilities also increased (Table 6).

As previously stated, the data in question fail to present a clear picture. One is compelled to consider the intrinsic contradiction between a negative effect on cognitive processes and a positive one on the ability to comprehend or retrieve information. These are concepts that, from a logical perspective, appear to be closely intertwined. Furthermore, at the time of the interviews, ChatGPT lacked direct internet access. Consequently, it operated on previously stored data, which rendered it less useful and current for information retrieval. It seems reasonable to suggest that these findings reflect the self-directed learning practices that have already been identified in the literature (e.g., Holmes and Tuomi, 2022; Puri and Baskara, 2023). When encountering topics explained in an unclear manner by teachers, these students requested assistance from AI to have the same topic explained and clarified more accessibly. The observed inconsistencies in the data may, in part, be attributed to the diffusion of similar practices. In any case, students’ perceptions of AI’s impact on the future of human capabilities exhibited neither a linear trajectory nor a clear inclination towards one of the two extremes under consideration (Fig. 5).

Fig. 5: The impact of artificial intelligence on human abilities (%).

Professors’ behaviour (through students’ eyes)

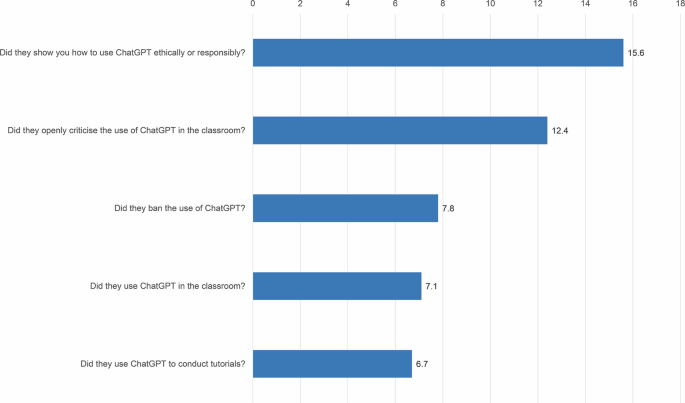

A group of five questions was included with the objective of verifying the behaviour of the teaching staff towards AI. The students were asked to indicate the extent to which their professors had used, censured/proscribed or elucidated the ethical and responsible use of ChatGPT in the classroom. As can be observed from Fig. 6, the responses pertaining to the use of ChatGPT in the classroom were notably scarce (approximately 7% of the total). The behaviours associated with an “abstract attitude” by the teaching staff towards this topic, such as open criticism or the indication of ethical use, were present to a slightly greater extent.

Fig. 6: In general, how did your university professors approach the use of ChatGPT (or similar tools)? (%).

The data indicate that at the time of the survey, 85% of professors, if not more, had not implemented any explicit type of behaviour related to GenAI tools. This is particularly relevant given that many of the interviewed students were enrolled in computer science and ICT courses or information engineering, which would entail exposure to these topics in their study plan.

A disaggregation of the data by course of study reveals some surprising insights (Table 7). The data regarding the use of AI in the classroom, the indication of how to use it ethically and open criticism (in white in the table) were statistically significantly associated with the course of study. In contrast, the use of AI and its prohibition were not statistically significant (columns in grey). What is particularly noteworthy is that the professors who were more active regarding AI were not those teaching in the fields that one might expect, such as computer science and ICT or information engineering. Instead, they were those teaching in political, social and communication studies, as well as art and design.

In conclusion, the data suggest that Italian university teaching staff are not particularly up-to-date in their approach to AI. During the period of our interviews, the vast majority of teaching staff did not implement any active behaviour related to AI, even in courses that would be expected to embrace these new tools. Furthermore, the most explicit manifestations of the teacher’s active engagement encompassed discussions, critiques and prohibitions but not the use of AI. This could be linked to the previously posed question (Table 3) concerning the extent of agreement with the opinion: “My professors DO NOT notice if I use ChatGPT”. In this case, 64.2% of students indicated that professors are aware when students employ GenAI (20.3% with certainty). This reinforces the significance of the issue of control over the use of AI, particularly with regard to its legality and suitability for use in academic institutions, as a crucial aspect emerging from these data.
